Every interaction with a large language model begins long before any neural network computation. It starts with tokenization — the process of converting human language into the numerical representations that models actually process. Understanding tokenizers unlocks a deeper understanding of why LLMs behave the way they do.

What is Tokenization?

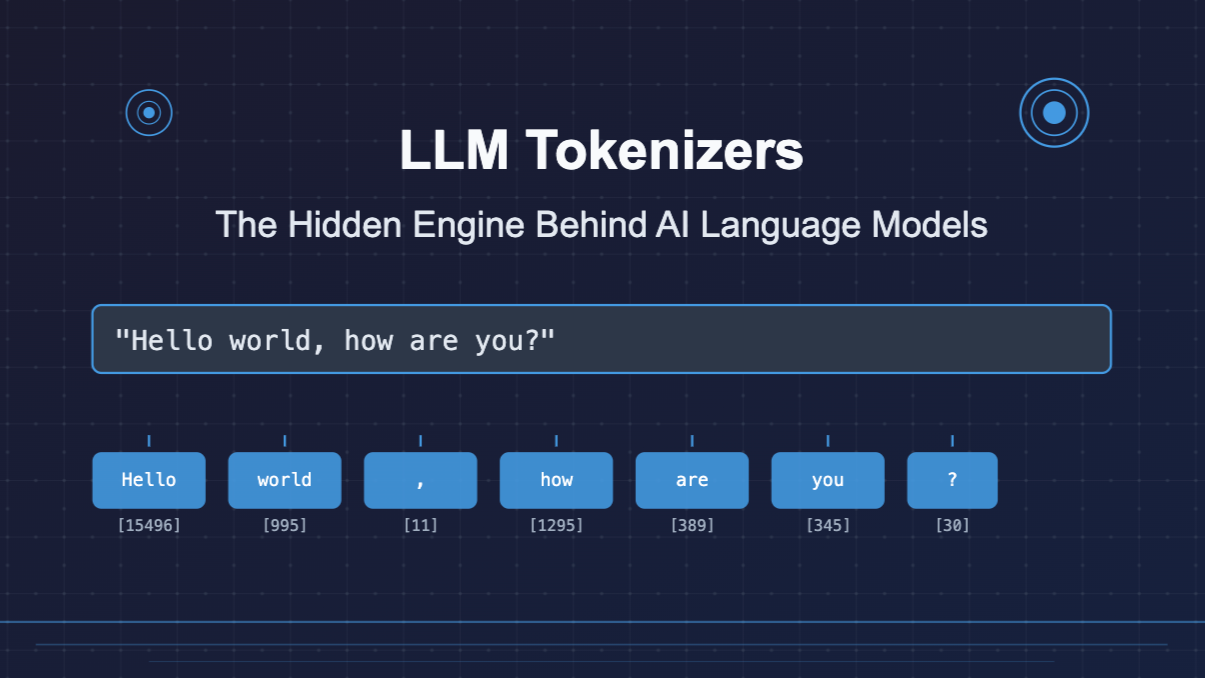

Tokenization transforms text into discrete units called tokens — numerical representations the model can process. Rather than understanding words directly, models convert input into token IDs corresponding to entries in a vocabulary.

“Hello, world!” might become [15496, 11, 995, 0] — four numbers that the model processes as a sequence. The model never sees the original text; it only sees tokens.

Why Tokenization Matters

The choice of tokenizer has profound downstream effects:

- Context window capacity — the maximum text a model can process is measured in tokens, not words or characters

- API costs — most LLM APIs charge per token; tokenization efficiency directly affects cost

- Cross-lingual performance — languages with inefficient tokenization get fewer “thoughts” per context window

- Specialised content — code, mathematical notation, and domain-specific text tokenize very differently than prose

Three Main Tokenization Approaches

1. Word-Based Tokenization

The system scans text character-by-character, treating delimiters (spaces, punctuation) as token boundaries. “Hello world!” becomes individual word units.

Advantages:

- Intuitive and semantically meaningful

- Simple to implement

- Efficient for common vocabulary

Limitations:

- Massive vocabularies for morphologically rich languages

- Out-of-vocabulary (OOV) problems for rare or new words

- Compound word challenges (German, Finnish, Turkish suffer most)

2. Character-Based Tokenization

Each character becomes a token. “Hello world” breaks into 11 tokens: H-e-l-l-o-[space]-w-o-r-l-d.

Advantages:

- Tiny vocabulary (100–200 tokens covers most languages)

- Eliminates unknown word problems entirely

Trade-offs:

- Sequences 5–10× longer than word-based

- The model must reconstruct semantic meaning from individual characters

- Higher computational cost for the same amount of text

3. Subword Tokenization (The Modern Standard)

Modern LLMs — GPT, Claude, Llama, Gemini — all use subword tokenization. Words are split into meaningful units: “Unlikeliest” becomes ["Un", "likely", "est"].

The dominant algorithm is Byte-Pair Encoding (BPE):

- Start with a vocabulary of individual characters

- Count the frequency of all adjacent token pairs

- Merge the most common pair into a new token

- Repeat until reaching the target vocabulary size (typically 10,000–100,000 tokens)

The result: common words become single tokens, rare words get split into recognisable subword units, and the vocabulary is compact enough to be manageable.

Impact on Model Performance

Economic Implications

Tokenization efficiency has real cost consequences:

- Training efficiency improves with optimised tokenization

- Fine-tuning costs scale directly with tokens per training example

- Inference latency varies based on how a given input tokenizes

A well-structured prompt in English might use significantly fewer tokens than an equivalent prompt in another language — directly affecting both cost and available reasoning capacity.

Cross-Lingual Disparities

This is one of the most significant fairness issues in modern LLMs. Languages with less efficient tokenization:

- Get fewer reasoning steps within a fixed context window

- Cost more per equivalent amount of text

- May have lower model quality due to less training data representation

English typically tokenizes very efficiently in models trained primarily on English text. Languages like Thai, Arabic, or many African languages often require significantly more tokens for equivalent content.

Technical Effects on Model Reasoning

- Attention dilution — when semantic units are spread across many tokens, the model’s attention mechanism must work harder to connect related concepts

- Boundary artifacts — token boundaries that misalign with semantic units can affect how the model processes meaning

- Embedding geometry — the choice of tokens shapes the internal representation of concepts in the model’s embedding space

Practical Optimisation Strategies

For prompt engineering:

- Structure prompts with awareness of how your target language tokenizes

- Use tokenizer visualisation tools (like OpenAI’s tokenizer playground) to inspect token boundaries

- Prefer structured formats (JSON, markdown) that often tokenize efficiently

For data preparation:

- Select formats based on tokenization performance for your use case

- Apply semantic compression — preserve meaning with fewer tokens where possible

- Be aware that code tokenizes very differently than prose

The Future of Tokenization

Emerging approaches are addressing current limitations:

- Character-level fallbacks for rare words and multilingual content

- Learned tokenizers that adapt during pre-training

- Semantic tokenization that incorporates meaning-based rather than purely frequency-based boundaries

- Byte-level models that operate directly on raw bytes, eliminating the tokenization step entirely

Key Takeaway

Tokenization forms the crucial bridge between human language and machine understanding. It shapes everything from API costs to model reasoning depth to cross-lingual fairness — yet it’s almost entirely invisible to most users.

Understanding how your text becomes tokens isn’t just an academic exercise. It directly informs better prompt design, more accurate cost estimation, and a clearer mental model of why LLMs behave the way they do.